A factory can be filled with sensors, dashboards, and live telemetry and still remain operationally blind at the exact moment a costly failure emerges. That is the central contradiction of today’s smart factory landscape.

The industrial challenge is no longer collecting data. The challenge is building an architecture that transforms signals into contextual decisions, routes those decisions into execution systems, and learns from outcomes at scale. That is why many AI + IoT programs generate enthusiasm in pilots but stall in production. The problem is rarely the model. It is the operating architecture around the model.

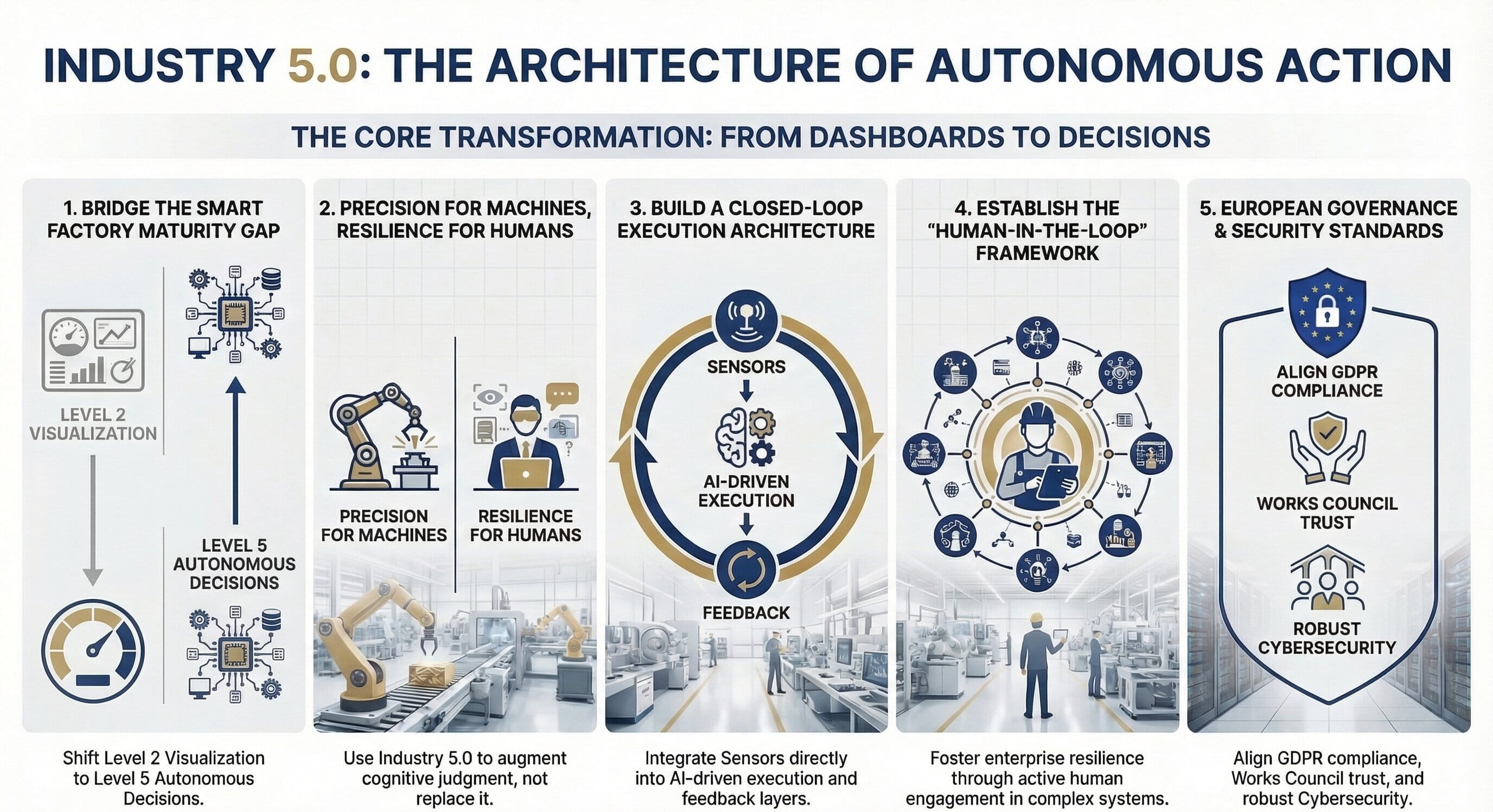

The smart factory maturity gap

Most manufacturers think they are progressing toward autonomy when they are actually parked in an intermediate state: highly instrumented, partially visible, and still deeply manual at the point of decision-making. The gap between visibility and execution is where ROI erodes and confidence disappears.

| Level | Capability | What it looks like in practice | Typical limitation |
|---|---|---|---|
| Level 1 | Data Collection | PLC signals, sensor feeds, machine states captured continuously. | Raw data without context remains ambiguous. |
| Level 2 | Visualization | Dashboards, trends, control-room visibility, reporting. | People still interpret everything manually. |
| Level 3 | Insights & Alerts | Threshold breaches, anomaly flags, predictive indicators. | Alerts create noise unless they trigger action. |
| Level 4 | Decision Support | Systems recommend parameter changes, interventions, or schedules. | Human bottlenecks still constrain scale. |
| Level 5 | Autonomous Decisions | Closed-loop actions with governed feedback and learning. | Requires strong architecture, trust, and governance. |

The architecture that actually changes outcomes

Most industrial initiatives skip straight from tools to expectations. A real smart factory design is not “sensors plus AI.” It is a layered system that creates decision quality, execution reliability, and feedback-based improvement.

Data acquisition

Capture PLC signals, temperature, vibration, pressure, machine states, and operator inputs from heterogeneous assets that report at different frequencies.

Contextualization

Merge machine data with orders, batches, shifts, maintenance history, and process state so the signal becomes operationally meaningful.

Event processing

Evaluate whether a deviation is normal, recurring, risky, or action-worthy rather than merely unusual.

Decision engine

Translate interpreted events into recommended or automated actions such as set-point changes, maintenance triggers, or routing changes.

Execution layer

Push decisions through APIs into MES, ERP, CMMS, or workflow tools so the system acts instead of merely informing.

Feedback loop

Measure whether the action prevented failure, improved throughput, or reduced waste so the system can learn and adapt.

Closed-loop principle

Sensors → Context → AI → Decision → Execution → Feedback is the operating logic that differentiates scalable industrial intelligence from dashboard-heavy digital theatre.


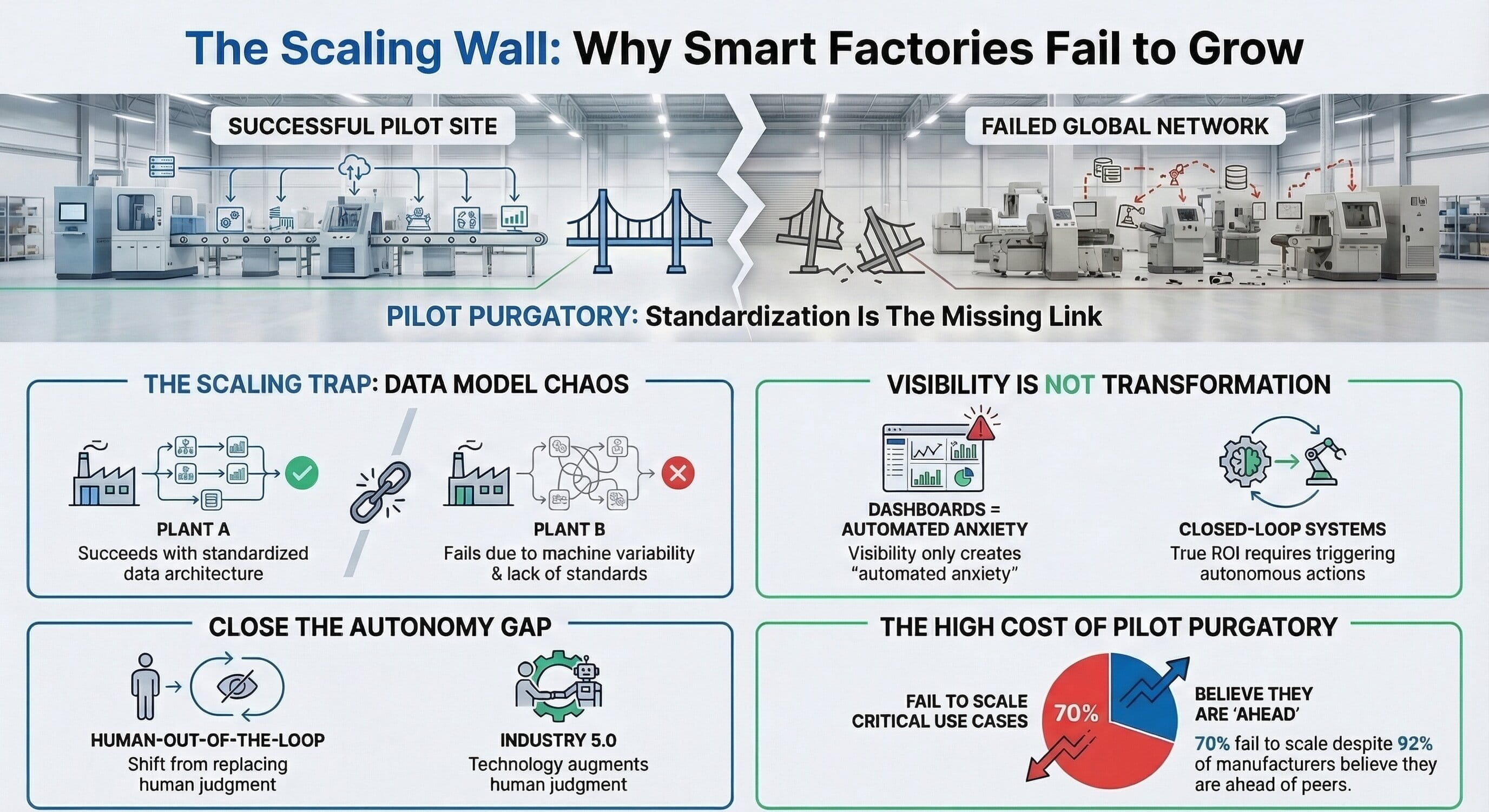

Why AI + IoT projects stall after the pilot phase

Industrial AI programs usually do not collapse in dramatic fashion. They decay through a familiar sequence: early momentum, partial wins, scaling friction, trust erosion, and then quiet operational abandonment.

The default failure pattern

- Excitement: Sensors are installed, dashboards go live, and stakeholders align around a promising pilot.

- Early wins: A few insights emerge, small improvements are visible, and internal confidence grows.

- Scaling attempt: Inconsistent tags, weak integrations, process variability, and infrastructure gaps appear.

- Resistance: Operators stop trusting the output, IT and OT disagree on ownership, and management starts questioning value.

- Silent failure: The system technically remains deployed, but decisions revert to manual habits and the investment becomes background noise.

Shop-floor reality: the human adoption test

One of the biggest mistakes in smart factory programs is assuming everyone values the same outcome. In reality, each audience judges the system against its own practical burdens.

Operators

They care about uptime, predictability, and hitting daily targets. If AI creates more noise, more confusion, or more clicks, it will be ignored regardless of technical sophistication.

Maintenance teams

They do not need more alerts. They need fewer surprises, more planned interventions, and schedules they can trust.

Management

They need measurable ROI, repeatability across sites, reduced operational risk, and confidence that the architecture can scale without reinvention.

Multi-plant scaling is an architecture test, not a rollout task

A system that works in one plant often fails in five because each site exposes hidden assumptions in the design. Machine populations differ. Operator behavior differs. Connectivity differs. Governance maturity differs. Without standard models and strong replication logic, scale becomes expensive reinvention.

Governance, security, and European operating reality

Industrial AI adoption does not depend only on technical quality. It depends on trust, security, and governance credibility. In European manufacturing environments, those elements are not secondary considerations. They are deployment prerequisites.

GDPR-aware data handling

Machine data can intersect with operator behavior and shift patterns. Storage, access, and retention rules therefore matter materially.

Works council alignment

If AI is perceived as surveillance rather than operational support, adoption can stop even when the technology is sound.

Cybersecurity by design

Every connected asset expands the attack surface. Security must be embedded continuously across acquisition, integration, and execution layers.

Where smart factory architecture actually produces ROI

Value is created when insight changes behavior, execution, and outcome. The highest-return use cases do not matter because they are fashionable. They matter because they connect data to action.

20–40% potential downtime reduction when predictive indicators are operationalized.

€200K–€500K potential annual savings per line in the right failure-prone environment.

10–18% potential scrap reduction when process deviation triggers corrective action.

3–6 months: typical time to ROI when deployments are bounded, governed, and execution-led.

Economic reality

Downtime at €5K–€20K per hour, scrap losses of 5–15%, and energy inefficiency of 10–25% create a compelling case for investment. But poor architecture leads to rework, integration debt, and vendor lock-in that quietly destroys the business case.

Implementation steps for a decision-driven factory

The path forward is not to buy more tools. It is to design the minimum viable architecture that proves closed-loop value quickly and can be repeated without redesign.

- Start with a business-critical failure mode where downtime, scrap, or energy loss is already visible and measurable.

- Model the context layer early by connecting telemetry to production order, material, shift, and maintenance data.

- Define the action pathway before deploying AI, including which system will execute or route the decision.

- Design for trust with explainability, confidence signals, escalation logic, and human override where appropriate.

- Instrument feedback so every intervention can be assessed for outcome quality and learning value.

- Standardize the replication model so a second plant is a deployment exercise, not a redesign program.

Executive self-diagnosis

Ask your team five blunt questions:

- Are we acting on data, or just viewing it?

- Can our system make governed decisions autonomously, or does everything depend on human interpretation?

- Can we scale the architecture across plants without redesign?

- Do operators, maintenance teams, and management all trust the outputs for their own reasons?

- Are we building enduring capability, or collecting disconnected tools?

Enhanced Full Blog Text — Board-Ready Report Format


Smart Factories Aren’t Smart (Yet)

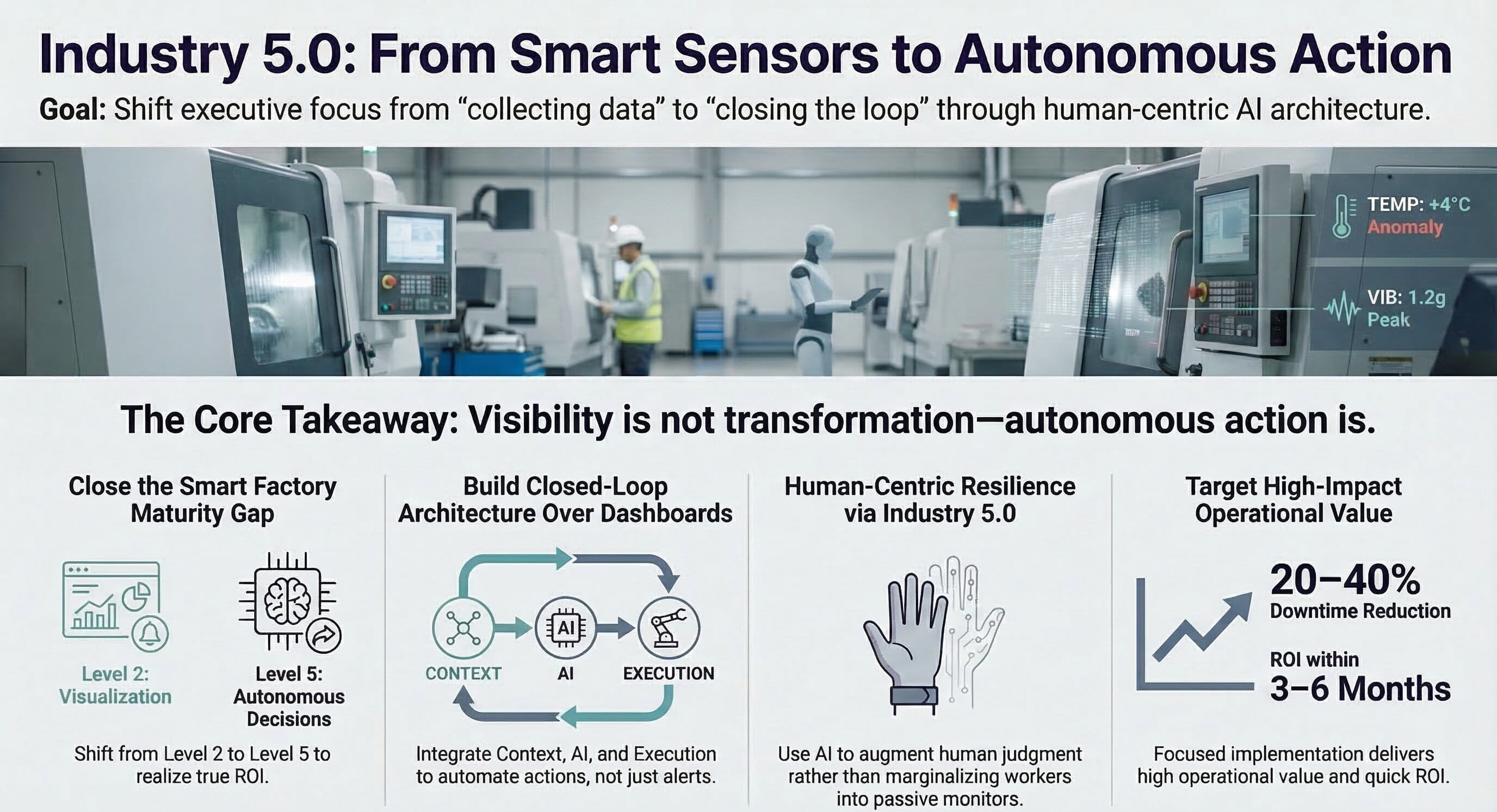

Why AI + IoT Projects Stall, and What It Actually Takes to Build a Decision-Driven Factory

The central lesson of this paper is straightforward: industrial data visibility does not equal industrial intelligence. Many factories have already invested in sensors, connected machines, dashboards, and analytics layers. Yet the operating model remains reactive because the enterprise has not built a decision system around the data. That gap between visibility and execution is where most smart factory initiatives lose momentum, trust, and return on investment.

1. Industrial Reality Check: The Factory That Knows Everything… and Still Gets Surprised

A €1.2M stamping press goes down at 02:17 AM. Not because of catastrophic failure. Not because of operator error. Because of a €200 bearing.

Now here is the uncomfortable part: the machine had 16 sensors installed, data was streaming continuously, and dashboards were live in the control room. And yet, nobody saw it coming.

If that sounds familiar, the issue is not a technology shortage. It is a decision problem. Most factories today are not lacking data. They are drowning in it. And still, downtime surprises the team, scrap fluctuates, operators rely on experience, and decisions happen after the fact.

If your system still needs a human to interpret every anomaly, it is not intelligent — it is expensive monitoring.

Many organizations proudly say, “We are a smart factory.” What they often mean is: we have sensors, we have dashboards, and we have visibility. That is not intelligence. That is instrumentation.

2. The Smart Factory Maturity Gap (Where Most Companies Are Stuck)

To define the reality more clearly, it helps to look at capability levels.

- Level 1: Data Collection

- Level 2: Visualization (Dashboards)

- Level 3: Insights & Alerts

- Level 4: Decision Support

- Level 5: Autonomous Decisions

Most factories operate between Level 2 and Level 3 while believing they are already approaching Level 4. That maturity gap is where ROI disappears, projects stall, and leadership loses confidence. Visibility is not transformation.

3. Why AI + IoT Is Not Your Problem

The industry narrative usually says: “IoT collects data. AI analyzes it.” That is technically correct, but architecturally incomplete.

What many systems actually look like is this: Sensors → Cloud → Dashboard → Human → Decision.

This design creates delayed response times, human bottlenecks, inconsistent decisions, and poor scalability.

A real system looks different: Sensors → Context → AI → Decision → Execution → Feedback.

This is a closed-loop system. Without it, AI becomes optional, insights remain unused, and systems do not improve. Dashboards do not scale. Decisions do.

4. What a Real AI + IoT Architecture Looks Like

This is where most implementations fail quietly, because teams skip architecture and jump to tools.

Layer 1: Data Acquisition. This includes PLC signals, sensor data such as temperature, vibration, and pressure, machine states, and operator inputs. It sounds simple, but it is not. Data arrives at different frequencies, in inconsistent formats, and is often incomplete. A vibration spike without context is just noise. Raw industrial data is not intelligence. It is unresolved ambiguity.
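To make the acquisition problem concrete, here is a minimal sketch, assuming each signal arrives as a list of (timestamp in seconds, value) pairs at its own rate. It aligns the streams onto a shared time grid by holding the last reading for a limited time (sample-and-hold) and marks stale points as missing instead of inventing values. The feed layout, the `align_to_grid` helper, the staleness limits, and the °F temperature source are all hypothetical.

```python
from bisect import bisect_right


def align_to_grid(readings, grid, max_age_s):
    """Sample-and-hold a (timestamp_s, value) stream onto a shared time grid.

    Each grid point gets the latest reading at or before it, or None when that
    reading is older than max_age_s, so gaps are flagged rather than filled.
    """
    readings = sorted(readings)
    times = [t for t, _ in readings]
    aligned = []
    for g in grid:
        i = bisect_right(times, g) - 1  # index of the last reading at or before g
        if i >= 0 and g - times[i] <= max_age_s:
            aligned.append(readings[i][1])
        else:
            aligned.append(None)
    return aligned


def fahrenheit_to_celsius(f):
    return (f - 32.0) * 5.0 / 9.0


# Hypothetical feeds: vibration every 1 s until it drops out after t=3,
# bearing temperature reported in °F every 5 s.
vibration = [(0, 0.8), (1, 0.9), (2, 1.1), (3, 4.2)]
temp_f = [(0, 149.0), (5, 152.6)]
grid = [0, 1, 2, 3, 4, 5]

vib_aligned = align_to_grid(vibration, grid, max_age_s=1)
temp_c = [None if v is None else round(fahrenheit_to_celsius(v), 1)
          for v in align_to_grid(temp_f, grid, max_age_s=5)]
```

The `None` at the end of `vib_aligned` is deliberate: downstream layers should see that the vibration feed went quiet rather than receive a guessed value.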

Layer 2: Contextualization. This is the most underrated layer. It combines machine data with production orders, material batches, shift schedules, and maintenance history. Without this layer, AI is guessing. This is why many AI projects work in pilots and fail in production: test environments are artificially clean. Reality is not.
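A minimal contextualization sketch, assuming production orders are available as time windows, a fixed three-shift pattern, and a per-machine list of service dates. All names (`OrderWindow`, `contextualize`, `press-07`, `PO-1001`) and the shift boundaries are hypothetical; a real deployment would pull this context from MES, ERP, and CMMS.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class OrderWindow:
    order_id: str
    batch_id: str
    start: datetime
    end: datetime


def shift_for(ts):
    # Hypothetical three-shift pattern: early 06-14, late 14-22, night 22-06.
    if 6 <= ts.hour < 14:
        return "early"
    if 14 <= ts.hour < 22:
        return "late"
    return "night"


def contextualize(machine_id, ts, value, orders, maintenance_log):
    """Attach order, batch, shift, and days since last service to one raw reading."""
    order = next((o for o in orders if o.start <= ts < o.end), None)
    services = [s for s in maintenance_log.get(machine_id, []) if s <= ts]
    last_service = max(services) if services else None
    return {
        "machine_id": machine_id,
        "timestamp": ts,
        "value": value,
        "order_id": order.order_id if order is not None else None,
        "batch_id": order.batch_id if order is not None else None,
        "shift": shift_for(ts),
        "days_since_service": (ts - last_service).days if last_service is not None else None,
    }


orders = [OrderWindow("PO-1001", "B-17", datetime(2026, 2, 18, 22, 0), datetime(2026, 2, 19, 6, 0))]
maintenance_log = {"press-07": [datetime(2025, 11, 1, 8, 0), datetime(2026, 1, 10, 7, 30)]}
ctx = contextualize("press-07", datetime(2026, 2, 19, 2, 17), 4.2, orders, maintenance_log)
```

The same vibration value means something different on the night shift, 39 days after the last service, in the middle of batch B-17; that combination is what the model needs to see.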

Layer 3: Event Processing. The system must ask whether the pattern is normal, whether it has happened before, what the probability of failure is, and most importantly whether the situation requires action.
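These questions can be sketched as a small rule-based classifier. This assumes a baseline of readings from the same context (same machine, product, and shift), a count of similar past deviations, and a failure probability supplied by a separate predictive model, none of which the article specifies. The z-score rule and both thresholds are illustrative placeholders, not tuned values.

```python
from statistics import mean, stdev


def classify_event(value, baseline, prior_occurrences, failure_probability,
                   z_limit=3.0, action_probability=0.3):
    """Classify one contextualized reading as normal, recurring, risky, or action.

    baseline: readings from the same context (machine, product, shift).
    prior_occurrences: similar deviations seen before without a failure.
    failure_probability: assumed to come from a separate predictive model.
    """
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma > 0:
        z = abs(value - mu) / sigma
    else:
        z = 0.0 if value == mu else float("inf")
    if z < z_limit:
        return "normal"      # inside the expected band for this context
    if failure_probability >= action_probability:
        return "action"      # unusual and likely to lead to failure
    if prior_occurrences > 0:
        return "recurring"   # unusual, but seen before without consequence
    return "risky"           # unusual and new: watch closely


baseline = [1.0, 0.9, 1.1, 1.0, 0.95, 1.05]
```

Only the last category should normally reach the decision engine; the others are what keeps alerts from becoming noise.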

Layer 4: Decision Engine. This is the heart of transformation. A mature system can adjust machine parameters, schedule maintenance, reroute production, or trigger contextual alerts.

If your system generates alerts but not actions, you have automated anxiety — not operations.
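A decision engine can start as an explicit rule table before any learned policy is introduced. The sketch below assumes events have already been classified as normal, recurring, risky, or action-worthy (the categories from Layer 3) and that a failure mode has been diagnosed. The failure modes, action names, and the 0.8 confidence threshold are hypothetical. Low-confidence decisions are routed to a human, which is one way to implement escalation logic and human override.

```python
from dataclasses import dataclass

# Hypothetical mapping from diagnosed failure mode to an executable action.
ACTION_BY_FAILURE_MODE = {
    "bearing_wear": "schedule_maintenance",
    "temperature_drift": "adjust_setpoint",
    "downstream_blocked": "reroute_production",
}


@dataclass
class Decision:
    action: str
    requires_approval: bool  # True routes the decision to a human before execution
    reason: str


def decide(event_class, failure_mode, confidence, auto_threshold=0.8):
    """Turn an interpreted event into an action, escalating when confidence is low."""
    if event_class == "normal":
        return Decision("none", False, "within expected band")
    if event_class in ("recurring", "risky"):
        return Decision("contextual_alert", False, f"{event_class} deviation, no action yet")
    if event_class != "action":
        raise ValueError(f"unknown event class {event_class!r}")
    action = ACTION_BY_FAILURE_MODE.get(failure_mode)
    if action is None:
        # No rule for this failure mode: never act blindly, ask a human.
        return Decision("contextual_alert", True, f"no rule for {failure_mode!r}")
    return Decision(action, confidence < auto_threshold, f"confidence {confidence:.2f}")
```

Keeping the rules explicit makes it easy to explain to operators why the system acted, which matters more for trust than model sophistication.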

Layer 5: Execution Layer. This is where many systems break. Decisions are not integrated, systems are siloed, and humans still execute manually. A real system closes that gap along the path Decision → API → MES/ERP → Action.
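One way to picture Decision → API → MES/ERP → Action is a work-order request to a maintenance system. The sketch builds, but does not send, a JSON POST with Python's standard `urllib.request.Request`. The endpoint URL and payload fields are hypothetical, since every CMMS defines its own API. Carrying the decision ID as an external reference is a design assumption intended to let the receiving system detect duplicates if the call is retried.

```python
import json
from urllib.request import Request

CMMS_URL = "https://cmms.example.internal/api/work-orders"  # hypothetical endpoint


def build_work_order_request(decision_id, machine_id, action, reason, priority="planned"):
    """Package a decision as a work-order POST for a (hypothetical) CMMS API."""
    payload = {
        "external_ref": decision_id,  # lets the receiver spot duplicate submissions
        "asset": machine_id,
        "type": action,
        "priority": priority,
        "description": reason,
    }
    return Request(
        CMMS_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_work_order_request("dec-0001", "press-07", "schedule_maintenance",
                               "bearing wear trend, confidence 0.92")
# Sending it would be urllib.request.urlopen(req, timeout=10); omitted in this sketch.
```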

Layer 6: Feedback Loop. Now the system learns. Was the decision correct? Did it prevent failure? Did it improve output? Without this, the system does not improve and the AI stagnates.
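A minimal feedback sketch, assuming each executed intervention is later marked as confirmed or not (for example, whether the inspection actually found bearing wear). It computes the share of interventions that were needed and nudges a hypothetical action threshold up or down. The target precision and step size are placeholders; a production system would also track missed failures, which this sketch ignores.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    decision_id: str
    executed: bool        # was the recommended intervention carried out?
    need_confirmed: bool  # did the intervention find a real problem?


def intervention_precision(outcomes):
    """Share of executed interventions that turned out to be necessary (None if none executed)."""
    executed = [o for o in outcomes if o.executed]
    if not executed:
        return None
    return sum(o.need_confirmed for o in executed) / len(executed)


def adjust_threshold(current, precision, target=0.7, step=0.05, lo=0.1, hi=0.9):
    """Raise the action threshold after too many unnecessary interventions, lower it otherwise."""
    if precision is None:
        return current
    if precision < target:
        return round(min(hi, current + step), 4)
    return round(max(lo, current - step), 4)


history = [
    Outcome("d1", True, True),
    Outcome("d2", True, False),
    Outcome("d3", True, True),
    Outcome("d4", True, False),
    Outcome("d5", False, False),  # recommended but not executed: excluded from precision
]
p = intervention_precision(history)  # 2 of 4 executed interventions were needed
new_threshold = adjust_threshold(0.3, p)
```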

5. Failure Anatomy: How Smart Factory Projects Collapse

Smart factory projects usually follow a recognizable path.

- Phase 1: Excitement. Sensors are installed, dashboards are created, and a pilot is launched. Everything looks promising.

- Phase 2: Early Wins. Insights are generated, minor improvements are seen, and internal buy-in increases.

- Phase 3: Scaling Attempt. Reality hits: data inconsistencies, integration challenges, and performance issues emerge.

- Phase 4: Resistance. Operators stop trusting the system, IT and OT misalign, and management questions ROI.

- Phase 5: Silent Failure. The system still exists, but nobody relies on it, decisions revert to manual processes, and the investment becomes sunk cost.

This is not the exception. This is the default outcome when execution architecture is missing.

6. Shop-Floor Reality: Where Theory Gets Destroyed

Factories are run by people under pressure, not by architecture diagrams.

Operators do not care about AI. They care about uptime, predictability, and hitting targets. If the system generates noise, creates confusion, or slows them down, it will be ignored. If your AI increases cognitive load, it is not intelligence — it is friction.

Maintenance teams do not want more alerts. They want fewer surprises, planned interventions, and predictable schedules.

Management wants ROI, scalability, and risk reduction.

One system must satisfy all three groups. Most do not.

7. Multi-Plant Scaling: Where Architecture Is Tested

A system that works in one plant often fails in five. Why? Because of machine variability, process differences, operator behavior, and infrastructure inconsistencies.

Without standardization, scaling becomes reinvention. Now the organization needs role-based access, clarity on data ownership, and cross-site consistency. It must also handle different latency conditions, connectivity realities, and edge requirements. Scaling is not deployment. It is architecture replication.
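Standardization can start as simply as mapping each site's native tag names onto one canonical asset and signal model. In this sketch the site names, tag formats, and canonical signal names are hypothetical. Unmapped tags raise an error instead of flowing through silently, so onboarding a new plant means writing its map rather than changing downstream logic.

```python
# Hypothetical per-site tag maps onto one canonical (asset, signal) model.
SITE_TAG_MAPS = {
    "plant_a": {
        "PRS07.VIB_RMS": ("press-07", "vibration_rms_mm_s"),
        "PRS07.TEMP_BRG": ("press-07", "bearing_temp_c"),
    },
    "plant_b": {
        "Line2/Press1/Vib": ("press-01", "vibration_rms_mm_s"),
        "Line2/Press1/BrgTemp": ("press-01", "bearing_temp_c"),
    },
}


def to_canonical(site, raw_tag, value):
    """Translate a site-specific tag reading into the shared model, or fail loudly."""
    mapping = SITE_TAG_MAPS.get(site, {})
    if raw_tag not in mapping:
        raise KeyError(f"{site}: unmapped tag {raw_tag!r}")
    asset, signal = mapping[raw_tag]
    return {"site": site, "asset": asset, "signal": signal, "value": value}


def unmapped_tags(site, raw_tags):
    """List tags a site publishes that the canonical model does not yet cover."""
    mapping = SITE_TAG_MAPS.get(site, {})
    return sorted(t for t in raw_tags if t not in mapping)
```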

8. Governance, Security & European Reality

This is where many global solutions fail.

GDPR matters because machine data can include operator behavior and shift patterns. That means data handling, storage, and access control matter materially.
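One common technique for operator-related fields is pseudonymization with a keyed hash, combined with a retention cutoff. The sketch below uses HMAC-SHA256 from Python's standard library; the key, the 90-day retention period, and the record layout are hypothetical. Pseudonymized data still counts as personal data under GDPR, so this reduces exposure rather than removing obligations.

```python
import hashlib
import hmac
from datetime import datetime, timedelta

# Hypothetical settings: load the key from a secrets store in practice, never from source code.
PSEUDONYM_KEY = b"example-key-not-for-production"
RETENTION = timedelta(days=90)


def pseudonymize(operator_id):
    """Keyed hash: the same operator always maps to the same token; linking back needs the key."""
    digest = hmac.new(PSEUDONYM_KEY, operator_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def prepare_for_storage(record, now):
    """Return a copy with the operator field pseudonymized, or None once past retention."""
    if now - record["timestamp"] > RETENTION:
        return None
    stored = dict(record)
    stored["operator"] = pseudonymize(record["operator"])
    return stored


now = datetime(2026, 2, 19, 8, 0)
recent = {"timestamp": datetime(2026, 2, 18, 23, 40), "operator": "EMP-4711", "machine": "press-07"}
old = {"timestamp": datetime(2025, 10, 1, 6, 0), "operator": "EMP-4711", "machine": "press-07"}
stored = prepare_for_storage(recent, now)
```

Which fields count as operator-related, and for how long they are kept, are decisions for data protection and works council stakeholders, not for the code.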

Works council considerations matter because AI can be perceived as surveillance. If that concern is ignored, adoption fails and rollout stops. In Germany, adoption is often blocked not by technology, but by trust.

Cybersecurity matters because more connected devices mean more risk. Digitization expands the attack surface. Security therefore must be embedded, continuous, and architected from the start.

9. Where AI + IoT Actually Deliver ROI

To cut through the hype: value comes from execution, not from insight alone.

- Predictive Maintenance: 20–40% downtime reduction and €200K–€500K annual savings per line.

- Adaptive Quality Control: 10–18% scrap reduction and fewer manual inspections.

- Energy Intelligence: Faster identification of inefficiencies and reduced operational cost.

- Production Optimization: Dynamic scheduling and adaptive workflows.

The pattern is simple: execution, not insight, is what produces measurable return.

10. Economic Reality: What This Means Financially

The cost of doing nothing is not abstract. A typical plant may face downtime of €5K–€20K per hour, scrap losses of 5–15%, and energy inefficiency of 10–25%.

In a focused implementation, ROI can arrive in 3–6 months through measurable cost savings and greater operational stability. The hidden cost, however, comes from poor architecture: rework, integration costs, and vendor lock-in.
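The ranges above can be turned into a quick sensitivity check. This sketch multiplies annual unplanned downtime hours by cost per hour and by the share of downtime avoided, then derives a simple payback period. The 60 hours per year and the €150K investment are hypothetical inputs; only the €5K–€20K per hour and 20–40% reduction ranges come from this article.

```python
def annual_downtime_savings(downtime_hours_per_year, cost_per_hour_eur, reduction_share):
    """Avoided downtime cost per year = hours x cost per hour x share of downtime avoided."""
    return downtime_hours_per_year * cost_per_hour_eur * reduction_share


def payback_months(investment_eur, annual_savings_eur):
    """Simple payback period in months, ignoring running costs and discounting."""
    return 12 * investment_eur / annual_savings_eur


HOURS = 60            # hypothetical unplanned downtime per line per year
INVESTMENT = 150_000  # hypothetical cost of a focused implementation, in EUR

low = annual_downtime_savings(HOURS, 5_000, 0.20)    # cheap downtime, modest reduction
high = annual_downtime_savings(HOURS, 20_000, 0.40)  # expensive downtime, strong reduction
```

Under these assumptions the same line yields about €60K per year at the low end and about €480K at the high end, a payback of 30 months versus under 4 months. That spread is why the business case depends on choosing a failure mode where downtime is genuinely expensive.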

The most expensive AI project is not the one that fails — it is the one that almost works.

11. The Future: From Smart to Autonomous

Manufacturing is moving into a new phase.

- Visibility

- Insight

- Decision Systems

- Autonomous Systems

Factories will move from reactive to predictive to autonomous. The winners will be the organizations that design the architecture early, rather than investing primarily in dashboards.

12. Decision Framework: How to Evaluate Your Approach

Executives should ask direct questions.

- Can the system act automatically?

- Who owns the data?

- Can the design scale across plants?

They should also watch for red flags: dashboard-heavy strategies, no edge capability, pilot-first thinking, and unclear governance. If the architecture looks deceptively simple, it probably will not scale.

13. Executive Summary

Data is solved. Decisions are not. Architecture determines success. AI amplifies system design. Most failures are predictable before the rollout begins.

14. Self-Diagnosis

Ask honestly:

- Are we acting on data — or just viewing it?

- Can our system make decisions autonomously?

- Can we scale without redesign?

- Do we trust our AI outputs?

- Are we building capability — or collecting tools?

If multiple answers are uncomfortable, then the organization does not have a technology issue. It has an architecture problem.

15. Final Thought

If the factory still depends on experienced operators to compensate for system gaps, then the transformation has not reduced risk. It has redistributed it.

And that is where most smart factory journeys quietly fail.

Anika, updated 19 Feb 2026, 08:15 AM

The distinction between instrumentation and intelligence is exactly the conversation many plants still avoid. We invested early in dashboards, but until decisions were tied into maintenance workflows, none of it changed operational behavior.

Thomas, updated 19 Feb 2026, 10:02 AM

Completely agree. We saw the same thing in an automotive environment. Alerts only became useful once they were linked to work orders and escalation logic instead of sitting in a monitoring screen.

Naveen, updated 19 Feb 2026, 01:20 PM

The contextualization layer is the most valuable point in this piece. A temperature rise means nothing without batch, shift, machine state, and maintenance history. Many AI projects fail because the model sees signals but not operating reality.

Julia, updated 20 Feb 2026, 09:05 AM

What stood out to me is the phrase “automated anxiety.” That is precisely what some predictive maintenance systems create when they generate alerts without confidence scoring, root-cause hints, or action pathways.

Markus, updated 20 Feb 2026, 11:11 AM

Yes, and that is where operator trust collapses. Once teams experience false urgency a few times, they start ignoring the system entirely.

Isha, updated 20 Feb 2026, 03:42 PM

The multi-plant scaling section is highly relevant. Our pilot worked well at one site, but standardizing tags, equipment models, and access rules across plants turned out to be the actual project.

Daniel, updated 21 Feb 2026, 08:30 AM

Strong article. The governance discussion is especially important for European manufacturers. In Germany, works council alignment and transparency on operator-related data are not side issues; they are core design requirements.

Katharina, updated 21 Feb 2026, 09:55 AM

Exactly. Projects often frame this as a technical rollout, but trust architecture is just as important as data architecture.

Rohit, updated 21 Feb 2026, 02:18 PM

The closed-loop model here is useful because it forces teams to ask whether the system can actually execute anything. If the answer is no, then most of the “AI transformation” language is premature.

Lena, updated 22 Feb 2026, 10:06 AM

I also appreciated the financial framing. The hidden cost is rarely the failed pilot. It is the near-successful solution that creates integration debt and still never becomes the operational standard.

Arvind, updated 22 Feb 2026, 12:21 PM

Well said. Those almost-working systems are the hardest to retire because leadership remembers the demo, not the operational drag.

Felix, updated 23 Feb 2026, 08:48 AM

The self-diagnosis questions at the end are practical. They are simple enough for executive teams but still expose whether a plant is building real capability or just accumulating tools.