Too many manufacturers have increased instrumentation without improving decision quality. More telemetry has not automatically translated into better plant performance.

Industrial IoT is moving from a connectivity project to an operational architecture discipline spanning data, security, integration, governance, and scale.

The future belongs to factories that shorten decision loops, embed intelligence into workflows, and treat industrial data as a strategic asset.

Factories are collecting more data than ever, yet many still operate with fragmented architecture, weak context, and limited operational intelligence.

The five foundations that separate smart factories from data graveyards

Industrial IoT has reached an awkward maturity point. The vocabulary is everywhere — connected assets, predictive maintenance, digital twins, AI, autonomous production, real-time visibility — but inside many plants, production, maintenance, quality, and engineering teams are still fighting the same old operational battles through disconnected systems and delayed insight.

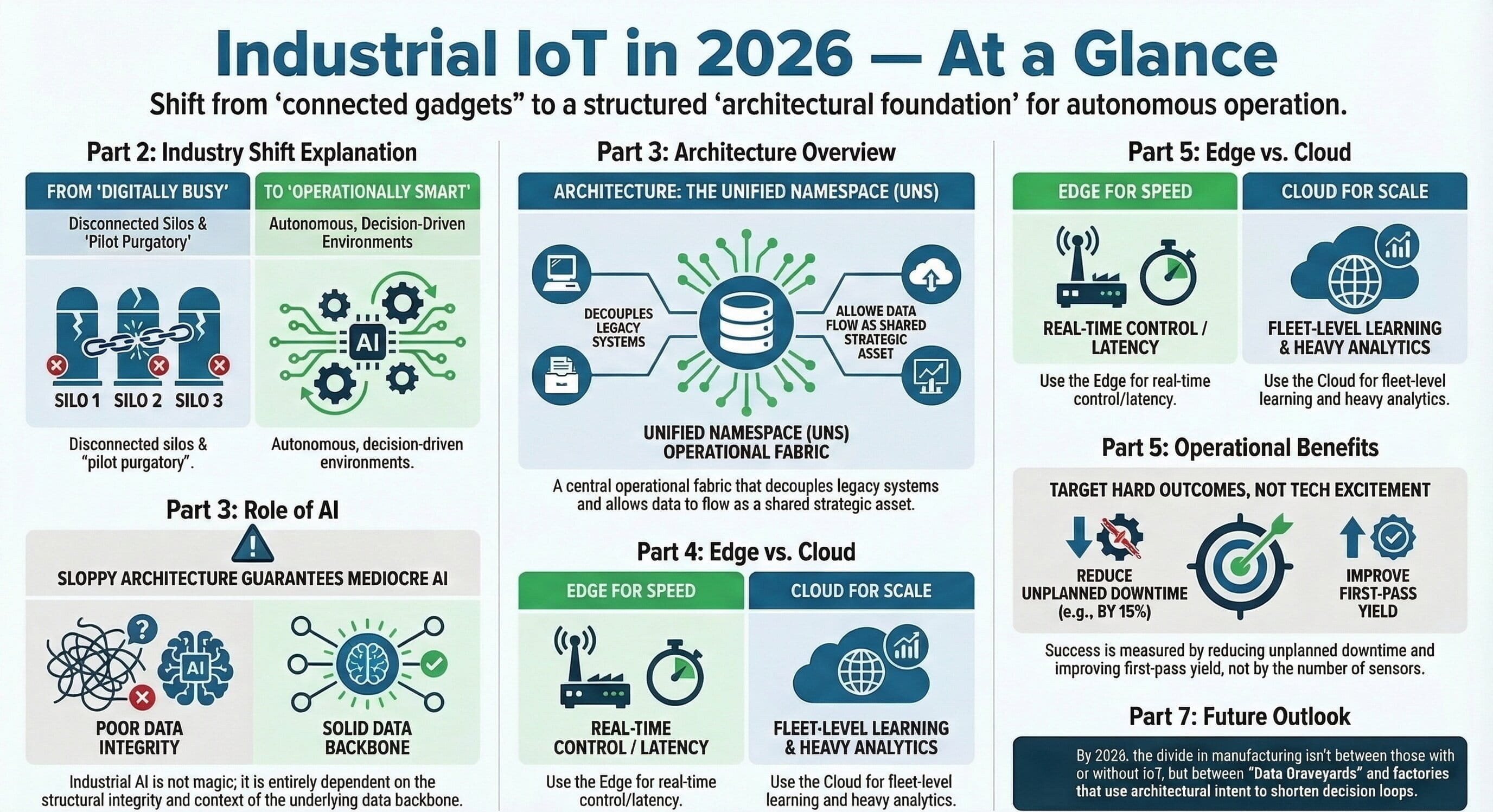

The problem is not a lack of ambition, and it is not a lack of technology. Sensors are cheaper, connectivity is easier, edge devices are stronger, cloud platforms are mature, analytics engines are powerful, and industrial AI has moved beyond novelty. The real constraint is architecture quality. Many factories are digitally busier, but not operationally smarter. Industrial IoT becomes expensive instrumentation layered on top of organizational silos and brittle integration instead of becoming the backbone for faster, better plant decisions.

That is why winning manufacturers in 2026 are no longer treating Industrial IoT as a side project owned by innovation teams. They are treating it as operational infrastructure: a system designed to connect outcomes, industrial data, cyber resilience, legacy integration, and scale into a coherent foundation for increasingly adaptive operations.

1. Outcome-first design

Anchor every architecture decision to a measurable plant objective such as downtime, throughput, yield, energy, or root-cause speed.

2. Strategic data systems

Move beyond raw signal capture and build context-rich industrial data models that support action across the enterprise.

3. Security by design

Treat cyber risk as operational risk and embed trust boundaries, access control, and lifecycle governance from the beginning.

4. Integration architecture

Use event-driven, standards-based integration patterns to connect legacy assets and modern platforms without creating new spaghetti.

5. Design for scale

Build modular, repeatable architecture with clear ownership so that new lines, sites, and use cases can be onboarded without a redesign.


A board-level summary of the Industrial IoT challenge

Start with operational outcomes, not technology excitement

The first mistake in many Industrial IoT programs appears in the first workshop. The conversation quickly gravitates toward technology: which sensors to install, which platform to use, which cloud to select, which dashboards to build, which AI tools to test. These sound like practical questions, but they often signal a deeper failure. The initiative is being shaped by available technology rather than business need.

The right starting point is much harder and much more useful: what operational problem is important enough to justify change? “Digital transformation” is not an operational goal. “Becoming smart” is not an operational goal. “Using AI” is not an operational goal. Plants improve when a specific industrial problem is identified, measured, addressed, and reduced.

Strong programs begin with concrete objectives: reduce unplanned downtime on critical assets, improve first-pass yield on a problematic line, cut energy waste in a defined process family, reduce mean time to root-cause analysis, improve OEE on a constrained production asset, increase maintenance planning accuracy, stabilize throughput variability, or reduce scrap caused by process drift. Once the problem is defined in operational terms, the architecture becomes easier to justify because the team knows what data matters, what systems must be involved, and which users need decisions rather than more screens.

This is also where many organizations get trapped in pilot purgatory. They launch a neat pilot on a handful of machines, generate a few visualizations, perhaps detect anomalies, and then stall because nothing was designed to prove business impact under real operating conditions. A serious program sets measurable targets from the start: reduce downtime by 15 percent on the bottleneck asset group, cut manual reporting effort by 40 percent, reduce energy cost per produced unit by 10 percent, or improve intervention timing enough to avoid a known failure class. These targets force discipline and make the project real.
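One way to keep such targets honest is to encode them next to the baseline they are measured against. The sketch below is only an illustration: the `Target` class, the metric name, and the 40-hour baseline are hypothetical, and only the 15 percent figure comes from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    """A program target: reduce `metric` by `reduction_pct` from `baseline`."""
    metric: str
    baseline: float
    reduction_pct: float  # 15.0 means "reduce by 15 percent"

    def goal(self) -> float:
        # The value the metric must reach or beat.
        return self.baseline * (1 - self.reduction_pct / 100)

    def achieved_pct(self, current: float) -> float:
        # Reduction actually achieved so far, as a percentage of baseline.
        return (self.baseline - current) / self.baseline * 100

    def met(self, current: float) -> bool:
        return current <= self.goal()


# Hypothetical baseline: 40 hours of unplanned downtime per month.
downtime = Target("unplanned_downtime_hours_per_month", 40.0, 15.0)
print(downtime.goal(), downtime.met(33.0), downtime.met(36.0))
```

Reviewing `achieved_pct` against the target at a fixed cadence gives the pilot a pass or fail criterion that does not depend on how good the dashboards look.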

There is also an uncomfortable but necessary truth here: not every problem needs IoT. Some factories digitize because digitization sounds strategic, even when the actual problem is poor process discipline, weak maintenance governance, bad master data, or inconsistent operating procedures. Industrial IoT is powerful, but it is not magic. It amplifies clarity when operational intent exists; it does not compensate for strategic confusion.

Treat industrial data as a strategic system, not a byproduct

Most factories do not suffer from a lack of data. They suffer from badly organized data ecosystems. Raw machine signals do not automatically create insight. Higher data volume is not intelligence, and a larger data lake is not the same thing as better decision-making. In fact, when the architecture is weak, more data often creates more confusion.

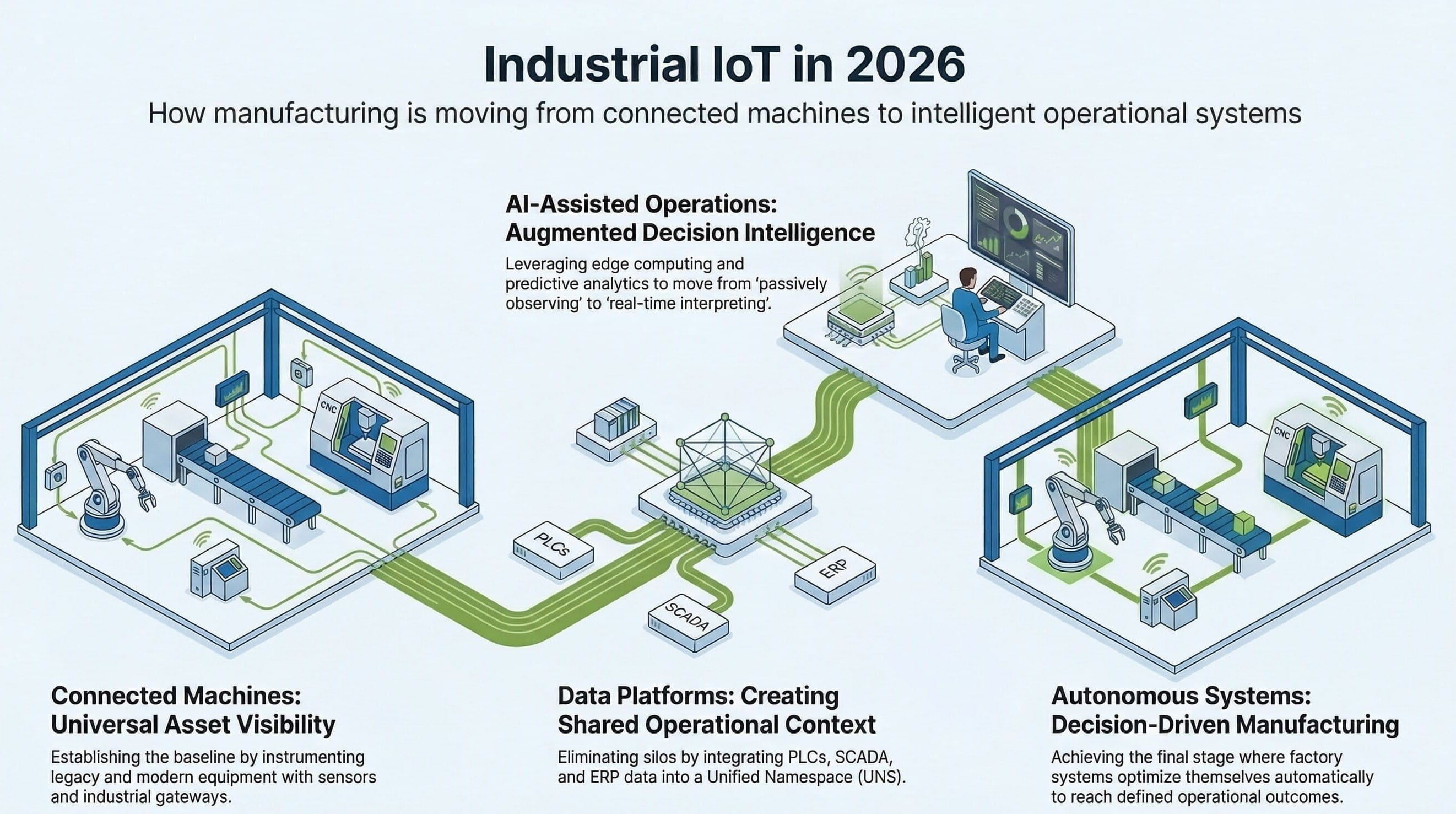

Industrial environments generate data across every layer of the business. PLCs emit machine states and control values. SCADA captures process behavior. MES records production execution events. Historians preserve time-series context. ERP tracks orders, inventory, and financial relationships. Quality systems hold inspection results and nonconformance records. CMMS or EAM platforms capture maintenance activities. Utilities, laboratories, logistics systems, and spreadsheets add even more fragments. Each system may have value in isolation, but together, without alignment, they create a maze.

That is why leading manufacturers in 2026 are rethinking industrial data architecture altogether. The old pattern — each system preserving its own local truth while teams manually stitch the picture together after the fact — is too slow, too brittle, and too dependent on heroic effort. Modern factories need a shared operational data model that allows events, states, asset context, and business relationships to move more fluidly.

This is where approaches such as Unified Namespace, event-driven architectures, industrial data fabrics, and edge-to-cloud streaming pipelines become strategically important. These are not fashionable labels for their own sake. They address the real disease: fragmentation. A mature Industrial IoT program does not merely ask how to collect data; it asks how industrial context can move across the enterprise in a way that supports action.

A vibration anomaly on a motor, for example, is weak information in isolation. It becomes meaningful when it is associated with asset identity, maintenance history, production schedule, quality deviations, operator actions, environmental conditions, and comparable patterns across similar assets. Industrial value comes from contextualized data, not isolated telemetry. Projects often drown because they instrument heavily and ingest everything without designing meaning.
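As a rough illustration of what "designing meaning" can look like, the sketch below attaches asset context to a raw vibration reading. Everything in it is assumed for the example: the field names, the asset ID, and the in-memory `ASSET_REGISTRY`, which stands in for lookups against maintenance, execution, and quality systems.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass(frozen=True)
class VibrationEvent:
    asset_id: str
    rms_mm_s: float
    timestamp: str  # ISO 8601 string, assumed UTC


@dataclass(frozen=True)
class AssetContext:
    asset_class: str
    line: str
    last_maintenance: str
    open_quality_deviations: int
    scheduled_order: Optional[str]


# Hypothetical lookup standing in for CMMS, MES, and quality system queries.
ASSET_REGISTRY = {
    "MTR-0042": AssetContext("motor", "line-3", "2026-01-10", 2, "ORD-7781"),
}


def contextualize(event, registry):
    """Merge a raw event with whatever context is known for its asset."""
    enriched = asdict(event)
    context = registry.get(event.asset_id)
    enriched["context_found"] = context is not None
    if context is not None:
        enriched.update(asdict(context))
    return enriched


event = VibrationEvent("MTR-0042", 7.1, "2026-02-03T08:15:00+00:00")
print(contextualize(event, ASSET_REGISTRY))
```

The `context_found` flag is deliberate: an event that cannot be tied to a known asset is itself a signal that the asset model has a gap.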

The right industrial data strategy begins with decision loops. Which decisions need to be made faster, earlier, or more accurately? Which users need which type of context? Which events should trigger investigation, action, or automation? When these answers are clear, the data model can be designed to support them. Governance also matters: ownership, naming standards, semantic consistency, access control, data quality rules, retention logic, and lineage visibility are all essential. If sites use different naming for the same asset class, if tags are inconsistent, or if time synchronization is weak, analytics will degrade and trust will disappear.
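Naming standards are easier to enforce when they are checked automatically. The sketch below assumes a hypothetical convention of five lowercase, slash-separated levels (`site/area/line/asset/measurement`). The pattern is illustrative and not an industry standard.

```python
import re

# Hypothetical convention: exactly five levels of lowercase letters,
# digits, or hyphens, separated by slashes.
TAG_PATTERN = re.compile(r"[a-z0-9-]+(/[a-z0-9-]+){4}")


def nonconforming_tags(tags):
    """Return the tags that do not follow the naming convention."""
    return [tag for tag in tags if not TAG_PATTERN.fullmatch(tag)]


tags = [
    "plant-a/packaging/line-3/mtr-0042/vibration-rms",
    "PlantB.Line3.Motor42.Vib",       # different separator and casing
    "plant-a/packaging/mtr-0042/temp",  # missing one level
]
print(nonconforming_tags(tags))
```

Running a check like this during site onboarding surfaces inconsistencies before they reach analytics, where they are far more expensive to untangle.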

From industrial data to operational intelligence

Build cybersecurity into the architecture from the beginning

Industrial cybersecurity remains one of the most underestimated aspects of Industrial IoT, which is remarkable because connected operations without security discipline are an open invitation to disruption. Every connected asset expands the attack surface. Every gateway, edge device, API, remote access path, historian connection, vendor integration, or cloud synchronization creates exposure.

The old mental split — OT as reliability-critical and IT as security-critical — no longer survives connected operations. Once industrial systems are linked, cyber risk becomes operational risk. Downtime, quality incidents, safety exposure, and business interruption are all on the line. Many industrial assets were never designed for hostile connectivity. They were built to run, not to defend themselves. Some depend on outdated operating systems, flat networks, weak credential practices, and vendor assumptions that do not hold against modern threat environments.

The threat landscape is also more aggressive. If plant operations can be interrupted, extorted, or manipulated, someone will eventually try. That is why cybersecurity cannot be treated as a bolt-on. It has to be a design principle. A zero-trust mindset is increasingly relevant: trust nothing implicitly, require continuous legitimacy for users, devices, applications, and connections, and govern access and data flow with explicit control points.
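The "explicit control points" idea can be sketched as a deny-by-default policy: a data flow is permitted only if it appears in an allow-list. The zone and protocol names below are hypothetical. A real deployment would enforce this in firewalls, brokers, and identity systems rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    source_zone: str
    dest_zone: str
    protocol: str


# Hypothetical allow-list: anything not listed here is denied.
ALLOWED_FLOWS = frozenset({
    Flow("cell-network", "edge-gateway", "opc-ua"),
    Flow("edge-gateway", "site-broker", "mqtt-tls"),
})


def is_allowed(flow, allowed=ALLOWED_FLOWS):
    """Deny by default: only explicitly listed flows pass."""
    return flow in allowed


print(is_allowed(Flow("edge-gateway", "site-broker", "mqtt-tls")))
print(is_allowed(Flow("vendor-laptop", "cell-network", "rdp")))
```

The point of the design is that a new connection requires a reviewed change to the allow-list, which gives security and operations a shared place to make that decision.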

That said, industrial cybersecurity cannot simply copy enterprise patterns without adjustment. Industrial systems have unique latency, availability, protocol, and safety characteristics. Security controls must respect these realities while remaining meaningful. Architecture teams, plant engineers, security specialists, and operations leaders need to design together rather than tossing responsibility from one function to another.

Lifecycle reality matters too. A secure pilot can become an insecure production estate if nobody defines who patches what, how certificates are rotated, how assets are inventoried, how anomalous behavior is monitored, how vendor access is governed, and how decommissioning happens. Security is not a procurement checkbox. It is an operational capability. Manufacturers doing this well understand that trust is part of performance: a connected factory that cannot be defended is not advanced — it is fragile, and fragility scales badly.

Solve integration like an architect, not like a patchwork mechanic

Industrial reality is messy. Factories are full of history, constraints, vendor-specific protocols, partial upgrades, site-specific workarounds, tribal knowledge, and equipment that still creates value long after anyone expected it to remain in service. That is why integration is where industrial theory collides with industrial execution.

In most plants, the challenge is not collecting data from a modern sensor. The challenge is making old machines, local applications, SCADA systems, historians, MES platforms, ERP systems, quality tools, maintenance platforms, and cloud services work together in a way that is stable, understandable, and scalable. That is hard, but it is also where serious industrial value is unlocked.

Disconnected architectures create multiple problems at once. Teams waste time reconciling conflicting information. Root-cause analysis becomes slow and political because each function maintains its own version of truth. Automation opportunities remain limited because signals do not travel reliably across boundaries. New use cases become expensive because every integration is custom, fragile, and difficult to support.

That is why integration is not back-office plumbing to be tackled after the exciting work is done. It is part of the exciting work because it determines whether the factory behaves like a nervous system or just a collection of isolated digital organs. Good integration starts with a hard look at the current estate: what should be preserved, what is aging, where protocol boundaries live, which exchanges should move from batch to event-driven, where manual workarounds are hiding structural defects, and where contextual data is trapped.

A common mistake is forcing modern analytics or cloud tooling directly onto legacy environments without a translation layer. That usually creates brittle point-to-point interfaces, duplicated data transformations, unclear ownership, and escalating maintenance debt. A better pattern is to use middleware, edge integration layers, industrial message brokers, and standardized protocol gateways to decouple systems intelligently. Open standards such as MQTT and OPC UA help, but even standards are not magic. They still require naming models, semantic alignment, payload discipline, and governance. Otherwise the result is simply standardized chaos.
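Payload discipline can be illustrated with a small data contract: every message has a predictable topic and a fixed set of fields. The topic hierarchy and the required fields below are assumptions chosen for the example. The sketch uses only the standard library and leaves out the publishing step, which would go through whichever MQTT client the plant already uses.

```python
import json
from datetime import datetime, timezone

# Hypothetical contract: every payload must carry these fields.
REQUIRED_FIELDS = {"value", "unit", "timestamp", "quality"}


def build_topic(*levels):
    """Join topic levels, rejecting MQTT separator and wildcard characters."""
    for level in levels:
        if not level or any(ch in level for ch in "/+#"):
            raise ValueError(f"invalid topic level: {level!r}")
    return "/".join(levels)


def build_payload(value, unit, quality="good", timestamp=None):
    """Serialize a reading as JSON that satisfies the contract."""
    ts = timestamp or datetime.now(timezone.utc)
    body = {"value": value, "unit": unit,
            "timestamp": ts.isoformat(), "quality": quality}
    return json.dumps(body, sort_keys=True)


def validate_payload(raw):
    """Parse a payload and fail loudly if a contract field is missing."""
    data = json.loads(raw)
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return data


topic = build_topic("acme", "plant-a", "packaging", "line-3", "mtr-0042", "vibration-rms")
payload = build_payload(7.1, "mm/s")
print(topic, validate_payload(payload)["unit"])
```

Consumers that validate against the same contract can be added without negotiating a new format with each producer, which is where the decoupling pays off.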

What mature integration looks like in practice

| Layer | Role in the architecture | Why it matters in 2026 |
|---|---|---|
| Asset & control layer | PLCs, sensors, machine states, process values, local control logic | Provides the raw operational signals, but needs context and governance before enterprise use |
| Edge integration layer | Protocol conversion, local filtering, buffering, secure connectivity, event publishing | Prevents legacy complexity from contaminating every new use case and keeps local decisions close to the process |
| Operational data distribution | Message brokers, event streams, shared operational context, reusable data contracts | Supports decoupled, scalable consumption across analytics, workflows, applications, and AI services |
| Enterprise applications | MES, ERP, QMS, CMMS/EAM, historians, planning, reporting | Turns machine events into business-relevant decisions, traceability, maintenance actions, and quality control |
| Analytics & intelligence | Dashboards, anomaly detection, optimization models, cross-site benchmarking, decision support | Creates value only when context, semantics, security, and integration are already working together |
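
The buffering role of the edge integration layer can be sketched as a bounded store-and-forward queue: messages accumulate locally while the uplink is down and are drained in order once it returns. The class name, the capacity, and the drop-oldest policy are assumptions for illustration. Production gateways usually persist the buffer to disk.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Bounded FIFO buffer that drops the oldest message when full."""

    def __init__(self, capacity):
        self._queue = deque(maxlen=capacity)
        self.dropped = 0

    def enqueue(self, message):
        if len(self._queue) == self._queue.maxlen:
            self.dropped += 1  # the append below evicts the oldest entry
        self._queue.append(message)

    def flush(self, send):
        """Send buffered messages in order; stop at the first failure."""
        sent = 0
        while self._queue:
            if not send(self._queue[0]):
                break  # uplink still down, keep the message for later
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self):
        return len(self._queue)


buffer = StoreAndForwardBuffer(capacity=3)
for reading in range(1, 6):
    buffer.enqueue(reading)

delivered = []
buffer.flush(lambda message: delivered.append(message) or True)
print(delivered, buffer.dropped)
```

Tracking `dropped` matters as much as the buffering itself, because downstream analytics need to know when a gap in the data comes from an outage rather than from the process.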

Design for scale from day one or prepare to rebuild later

A pilot that works on one line proves almost nothing about whether the architecture can support a plant, a network of plants, or a global manufacturing footprint. This is one of the most expensive lessons in Industrial IoT. Early success often looks convincing until device counts multiply, data volume spikes, latency behavior changes, governance becomes messy, security varies by site, and the cost of onboarding each new asset remains stubbornly high.

This happens because scalability was treated as a future problem. That is a mistake. Scalability is not just about whether infrastructure can handle more data. It is about whether architecture, operating model, governance, integration patterns, and semantics can support growth without collapsing under their own weight.

In 2026, serious Industrial IoT programs are expected to support monitoring, cross-site analytics, AI model deployment, asset benchmarking, event orchestration, remote operations support, and increasingly autonomous workflows. Technically, that means architectures should be modular. Services should be decomposed enough to evolve independently. Data flows should support event-driven patterns instead of depending entirely on brittle batch transfers. Edge and cloud responsibilities should be deliberate rather than ideological: real-time local decisions belong close to the process, while fleet-level learning, historical optimization, and enterprise coordination may belong in centralized platforms.

Repeatability is equally important. Can a new line, site, or asset class be onboarded using templates, standard models, reusable data contracts, and proven deployment patterns? Or does every rollout require custom engineering and tribal heroics? That answer usually determines whether an initiative becomes strategic infrastructure or an endlessly expensive services exercise. Semantic scale matters too. If each site names assets differently, structures tags differently, and interprets shared metrics differently, enterprise insight stays weak no matter how much data is collected.
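Template-based onboarding can be sketched as follows: an asset class is defined once, and each new asset is generated from that definition instead of being configured by hand. The template contents, topic layout, and identifiers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssetTemplate:
    asset_class: str
    measurements: tuple  # pairs of (measurement name, unit)


# Hypothetical standard model for one asset class.
MOTOR_TEMPLATE = AssetTemplate(
    "motor",
    (("vibration-rms", "mm/s"), ("winding-temp", "degC"), ("current", "A")),
)


def onboard(template, site, line, asset_id):
    """Generate the data points for a new asset from its class template."""
    return [
        {
            "topic": f"{site}/{line}/{asset_id}/{name}",
            "unit": unit,
            "asset_class": template.asset_class,
        }
        for name, unit in template.measurements
    ]


for point in onboard(MOTOR_TEMPLATE, "plant-b", "line-1", "mtr-0007"):
    print(point["topic"], point["unit"])
```

Because every motor at every site is generated from the same template, measurement names and units stay aligned, which also addresses the semantic scale concern described above.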

Finally, scale requires ownership. Who governs the platform? Who approves integrations? Who manages standards, edge deployments, site onboarding, support, cybersecurity consistency, and lifecycle updates? Without these answers, the architecture may scale technically while failing operationally. Mature manufacturers build with a long horizon in mind. They may start small in scope, but they do not think small in architecture.

How leaders translate Industrial IoT into measurable value

The strongest programs do not defend Industrial IoT with abstract innovation language. They prove it through operational economics. A well-structured initiative ties architecture choices to plant outcomes that matter financially and operationally: downtime, scrap, throughput, energy intensity, maintenance planning quality, reporting effort, and root-cause speed.

Example target for reducing unplanned downtime on bottleneck asset groups through earlier anomaly visibility and better intervention timing.

Illustrative reduction in repetitive reporting effort when machine, process, and business events are unified and contextualized.

Representative improvement when process-family visibility supports targeted energy optimization instead of broad guesswork.

Higher confidence and shorter investigation cycles when teams can correlate machine behavior, maintenance history, quality drift, and schedule context.

These are not vanity metrics. They are examples of the discipline required to prevent Industrial IoT from becoming a showroom project. Once target outcomes are explicit, the initiative can be governed as a business system rather than as a technology experiment.

A practical sequence for moving from connected assets to operational intelligence

| Phase | What to do | What success looks like |
|---|---|---|
| 1. Define the pain | Start with a hard operational problem tied to measurable plant loss: downtime, scrap, throughput variability, energy waste, or root-cause delay. | The initiative has an explicit business case, target metrics, and named stakeholders who own the outcome. |
| 2. Map decision loops | Identify which decisions need to become faster or more accurate, who makes them, what context is missing, and which events should trigger action. | The team knows which data matters and why, avoiding indiscriminate signal collection. |
| 3. Architect the data backbone | Design contextual data flow across PLC, SCADA, MES, historian, ERP, quality, and maintenance systems using governed models and reusable pipelines. | Industrial data becomes consumable, trustworthy, and scalable for applications, analytics, and AI services. |
| 4. Secure the estate | Embed segmentation, identity, least privilege, lifecycle governance, and monitored connectivity from day one. | Connected operations remain defensible as the attack surface expands. |
| 5. Test for scale early | Use pilots to validate value and repeatability, not just functionality. Stress onboarding, governance, site variation, and supportability. | The architecture can absorb more devices, sites, and use cases without a redesign. |

The future belongs to factories that can act, not just observe

The Industrial IoT conversation has matured. Connectivity alone is no longer transformation. The meaningful divide in 2026 is not between factories that have IoT and factories that do not. It is between factories that collect data and factories that operationalize intelligence; between factories that admire dashboards and factories that shorten decision loops; between factories that generate digital noise and factories that build digital architecture.

The organizations that will pull ahead are not the ones deploying the most sensors or buying the most fashionable platforms. They are the ones aligning Industrial IoT to hard operational outcomes, building disciplined industrial data systems, embedding cybersecurity into the foundation, integrating legacy and modern systems intelligently, and scaling with architectural intent. That is how industrial data stops being a reporting burden and becomes an operational asset. That is how connected operations begin to evolve toward increasingly autonomous ones.

The real promise of Industrial IoT has never been data collection. It has always been industrial decision advantage. The manufacturers that understand that are not building tech showcases. They are building the future factory.

Enhanced Full Blog Text — Board-Ready Report Format

Executive Summary. Industrial IoT has entered a decisive phase. The technology stack is no longer the limiting factor. Sensors are cheaper, connectivity is easier, edge devices are more capable, cloud platforms are mature, analytics engines are powerful, and industrial AI is no longer experimental. Yet many factories still struggle to convert connected data into meaningful operational advantage. The difference now lies in architecture quality: the ability to connect outcomes, data, security, integration, and scale into a system that supports faster, more accurate, and more trusted decisions across the plant.

Industrial IoT in 2026: The 5 Strategic Foundations Separating Smart Factories from Data Graveyards

Industrial IoT has reached an awkward stage in its maturity curve.

Almost every manufacturer now talks about connected assets, predictive maintenance, AI, digital twins, smart operations, autonomous production, or real-time visibility. On paper, the market looks advanced. The language is everywhere. The ambition is everywhere. The technology stack is broader and more accessible than ever. Sensors are cheaper. Connectivity is easier. Edge devices are more capable. Cloud platforms are mature. Analytics engines are powerful. Industrial AI is no longer a science experiment.

And yet, inside many factories, the reality is far less impressive.

Production teams still chase issues through disconnected systems. Maintenance teams still rely on reactive workflows. Quality teams still investigate defects after the fact. Engineering teams still struggle to correlate machine behavior, process drift, and business outcomes. Leaders still sit in front of dashboards that display a lot of data but do not meaningfully change plant performance.

That is the central contradiction of Industrial IoT in 2026.

Factories are collecting more data than ever, but many are still operating with limited context, fragmented architecture, and weak decision loops. They are digitally busier, not always operationally smarter. In too many cases, Industrial IoT has become an expensive layer of instrumentation sitting on top of the same old organizational silos and technology fragmentation.

This is why so many initiatives stall. Not because the vision is wrong, and not because the technology is useless, but because the architecture behind the initiative is weak.

Industrial IoT success does not come from buying a platform, deploying a pilot, or installing sensors on a few machines. It comes from designing a system that connects operational objectives, industrial data, cyber resilience, legacy integration, and scalability into one coherent digital foundation.

The manufacturers winning in 2026 understand this. They are no longer treating Industrial IoT as a side project owned by innovation teams. They are treating it as operational infrastructure. They are not chasing visibility for its own sake. They are building the technical and organizational backbone required to move from raw machine data to intelligent, adaptive operations.

That is the real shift underway.

The conversation is no longer just about connecting machines. It is about creating factories that can sense, interpret, decide, and improve with increasing levels of autonomy. It is about moving from dashboards to action, from isolated pilots to enterprise architecture, and from digital enthusiasm to measurable industrial performance.

To get there, five strategic foundations matter more than anything else.

1. Start with Operational Outcomes, Not Technology Excitement

This is where most Industrial IoT initiatives go wrong from day one.

The first discussion often revolves around technology. Which sensors should be installed? Which platform should be used? Which cloud should host the data? Which dashboards should be built? Which AI tools should be tested? These questions sound practical, but they are often symptoms of a deeper problem: the initiative is being shaped by available technology rather than business need.

That is backwards.

The right starting point is brutally simple: what operational problem is important enough to justify change?

If the answer is vague, the project is already in trouble. “Digital transformation” is not an operational goal. “Becoming smart” is not an operational goal. “Using AI” is definitely not an operational goal. These are slogans. Plants do not improve because of slogans. They improve because a hard industrial problem is identified, measured, addressed, and reduced.

The strongest Industrial IoT programs begin with a concrete operational outcome. Reduce unplanned downtime on high-value assets. Improve first-pass yield on a line with recurring quality losses. Cut energy waste across a specific process family. Reduce mean time to root-cause analysis when performance drops. Improve OEE on a constrained production asset. Increase maintenance planning accuracy. Stabilize throughput variability. Reduce scrap caused by process drift. That is real ground to stand on.

Once the operational problem is clearly defined, the architecture becomes easier to justify. You know what data matters. You know which systems need to be involved. You know where instrumentation gaps exist. You know which users need decisions, not just which users need screens.

This is also the point where many companies fall into pilot purgatory.

They launch a neat pilot on a handful of machines, generate a few visualizations, maybe even detect some anomalies, and then stall. Nothing scales. Nothing gets embedded into operations. Nothing changes structurally. The pilot becomes a showroom, not a transformation mechanism.

Why does that happen? Because the pilot was designed to prove technology, not to prove business impact under real operating conditions.

A serious Industrial IoT initiative defines success in operational terms from the start. It sets measurable targets. Reduce downtime by 15 percent on the bottleneck asset group. Cut manual reporting effort by 40 percent. Reduce energy cost per produced unit by 10 percent. Improve maintenance intervention timing enough to avoid a defined class of failure. Those targets force discipline. They make the project harder, but they also make it real.

There is another uncomfortable truth here. Not every problem needs IoT. Some factories digitize simply because digitization sounds strategic. That is lazy thinking. If the root cause is poor process discipline, weak maintenance governance, bad master data, or inconsistent operating procedures, connecting more sensors may not help much. Industrial IoT is powerful, but it is not magic. It amplifies clarity where operational intent exists. It cannot compensate for strategic confusion.

The manufacturers that get this right do something deceptively simple: they translate plant pain into architecture decisions. They do not start with gadgets. They start with consequences. They know which assets matter, which losses matter, which decisions matter, and which metrics truly indicate business value. Everything else follows from that.

2. Treat Industrial Data as a Strategic System, Not a Byproduct

Most factories do not suffer from a lack of data. They suffer from badly organized data ecosystems.

This is the second major reason Industrial IoT programs disappoint. Companies assume that once machine data is collected, value will naturally emerge. It does not. Raw signals are not insight. Data volume is not intelligence. A larger data lake is not the same thing as better decision-making. In fact, without a sound data architecture, more data often creates more confusion.

Industrial environments generate data across every layer of the operation. PLCs emit machine states and control values. SCADA systems capture process behavior. MES records production execution events. Historians preserve time-series context. ERP stores order, inventory, and financial relationships. Quality systems maintain inspection results and nonconformance records. CMMS or EAM platforms capture maintenance activities. Laboratories, utilities, logistics systems, and even spreadsheets add more fragments to the picture.

Each system may be valuable on its own. Together, without alignment, they become a maze.

This is why industrial leaders in 2026 are rethinking data architecture altogether. The old pattern of letting every system manage its own local truth and then manually stitching information together after the fact is too slow, too brittle, and too dependent on heroics. Modern factories need a shared operational data model that allows events, states, and business context to move more fluidly.

That is where concepts like Unified Namespace, event-driven architectures, industrial data fabrics, and edge-to-cloud streaming pipelines have become strategically important. Not because they are fashionable terms, but because they address the actual disease: fragmentation.

A mature Industrial IoT program does not just ask, “How do we collect data?” It asks, “How does industrial context move across the enterprise in a way that supports action?” That is a very different question.

For example, a vibration anomaly on a motor is not particularly meaningful in isolation. It becomes meaningful when associated with asset identity, maintenance history, production schedule, quality deviations, operator actions, environmental conditions, and similar patterns observed across comparable assets. In other words, industrial value comes from contextualized data, not isolated telemetry.

This is where too many projects drown. They instrument heavily, ingest everything, and then wonder why the output is weak. The answer is simple: they collected signals without designing meaning.

The right industrial data strategy begins by identifying the critical decision loops in the business. Which decisions should be made faster, earlier, or more accurately? Which users need what kind of context? Which events must trigger investigation, action, or automation? Once those answers are clear, the data model can be designed accordingly.

There is also a governance angle that cannot be ignored. Industrial data needs ownership, naming standards, semantic consistency, access rules, quality controls, retention logic, and lineage visibility. If different sites describe the same asset class differently, or if tags are inconsistent, or if time synchronization across systems is unreliable, analytics will degrade and trust will evaporate. Once plant teams stop trusting the data, the initiative starts rotting from the inside.
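Unreliable time synchronization is one of the easier governance problems to detect. The sketch below compares the timestamps that two systems recorded for the same event and flags pairs that disagree by more than a tolerance. The two-second tolerance, the event IDs, and the idea that both systems share an event identifier are assumptions for illustration.

```python
from datetime import datetime, timedelta

MAX_SKEW = timedelta(seconds=2)  # hypothetical tolerance


def skewed_events(pairs, max_skew=MAX_SKEW):
    """Return (event_id, skew) where two source timestamps disagree too much.

    `pairs` holds (event_id, timestamp_a, timestamp_b) tuples with
    ISO 8601 strings that include a UTC offset.
    """
    flagged = []
    for event_id, ts_a, ts_b in pairs:
        skew = abs(datetime.fromisoformat(ts_a) - datetime.fromisoformat(ts_b))
        if skew > max_skew:
            flagged.append((event_id, skew))
    return flagged


pairs = [
    ("stop-1", "2026-02-03T08:15:00+00:00", "2026-02-03T08:15:01+00:00"),
    ("stop-2", "2026-02-03T09:00:00+00:00", "2026-02-03T09:00:30+00:00"),
]
print(skewed_events(pairs))
```

A recurring skew between the same two systems usually points to a clock configuration problem rather than a data problem, so it is worth fixing at the source before correcting it in analytics.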

The strongest manufacturers now treat industrial data the way serious software organizations treat platform engineering: as a shared strategic capability. They invest in reusable data pipelines, standardized event structures, governed asset models, and real-time distribution patterns that allow applications, analytics, and AI services to consume operational data consistently.

This matters even more as industrial AI grows. AI models are not clever enough to rescue chaotic input ecosystems. If the underlying data is delayed, inconsistent, context-poor, or semantically unstable, model performance will be unreliable. A sloppy data backbone guarantees mediocre AI no matter how polished the demo looks.

The companies pulling ahead understand that Industrial IoT is not fundamentally a sensor problem. It is a data architecture problem. Sensors are easy. Building a trustworthy operational data system that can support analytics, automation, optimization, and cross-functional decision-making is the hard part. It is also the part that determines whether anything built on top of it can be trusted.

3. Build Cybersecurity into the Architecture from the Beginning

Industrial cybersecurity is still one of the most underestimated aspects of Industrial IoT.

That is absurd, because connected operations without security discipline are basically an invitation to disruption.

Every additional connected asset expands the attack surface. Every gateway, edge device, API, remote access path, historian connection, vendor integration, or cloud synchronization introduces new exposure. Many plants still operate with a dangerous mental split: OT is viewed as reliability-critical and IT is viewed as security-critical. That separation is now obsolete. Once industrial systems are connected, cyber risk becomes an operational risk. Downtime, quality incidents, safety exposure, and business interruption are all on the line.

In traditional industrial environments, many assets were never designed for hostile connectivity. They were built to run, not to defend themselves. Some were designed decades ago. Many still depend on outdated operating systems, flat networks, weak credential practices, and vendor assumptions that do not survive contact with modern threat landscapes. Adding connectivity to such systems without redesigning trust boundaries is reckless.

The threat environment is also harsher now. Ransomware groups understand the economics of manufacturing disruption. Supply chain attacks increasingly exploit third-party pathways. Remote access tools, unmanaged devices, weak segmentation, stale firmware, and shared passwords continue to create obvious openings. If plant operations can be interrupted, extorted, or manipulated, someone will eventually try.

This is why cybersecurity can no longer be treated as a bolt-on. It has to be a design principle.

The zero-trust model is especially relevant in industrial environments. The basic idea is simple: trust nothing implicitly. Every device, user, application, and connection must continuously prove legitimacy before access is granted or maintained. In OT-heavy environments, that requires adaptation, but the principle remains sound. Identity matters. Segmentation matters. Least privilege matters. Monitoring matters. Secure update processes matter. Device inventory matters. Vendor access governance matters.
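The deny-by-default and least-privilege ideas can be sketched in a few lines. This is a toy policy check, not a real access-control product: the identities, zones, and resource names are hypothetical, and a production system would verify identity cryptographically rather than accept an `authenticated` flag.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    identity: str  # device, user, or service identity
    zone: str      # network segment the request originates from
    resource: str
    action: str    # "read" or "write"


# Explicit allow-list: anything not listed here is denied.
ALLOWED = {
    Grant("historian-svc", "dmz", "line-2/plc-01", "read"),
    Grant("maint-tablet-14", "line-2", "line-2/plc-01", "read"),
}


def is_allowed(identity, zone, resource, action, authenticated):
    """Deny by default; allow only authenticated requests with an exact grant."""
    if not authenticated:
        return False
    return Grant(identity, zone, resource, action) in ALLOWED


print(is_allowed("historian-svc", "dmz", "line-2/plc-01", "read", True))
print(is_allowed("historian-svc", "dmz", "line-2/plc-01", "write", True))
print(is_allowed("historian-svc", "office", "line-2/plc-01", "read", True))
```

Because the grant includes the originating zone, the same identity connecting from an unexpected segment is refused, which is the segmentation principle expressed as policy rather than as a network diagram.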

And yes, this makes projects harder. Good. They should be harder. Connecting machines that control physical processes should never be so easy that it can be done carelessly.

One of the biggest mistakes manufacturers make is assuming standard enterprise security controls are enough. They are not. Industrial systems have unique latency, availability, protocol, and safety characteristics. Security measures must respect those realities without becoming decorative. That means architecture teams, plant engineers, security specialists, and operations leaders need to design together rather than passing responsibility around like a hot potato.

Another frequent mistake is ignoring lifecycle reality. A secure pilot can turn into an insecure production environment if no one defines who patches what, how certificates are rotated, how assets are inventoried, how anomalous behavior is detected, how decommissioning happens, and how third-party integrations are governed over time. Security is not a procurement checkbox. It is an operational capability.
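Some of those lifecycle questions can be turned into a routine check against the asset inventory. The sketch below assumes a hypothetical inventory format (asset, owner, certificate expiry) and a 30-day rotation window; both are illustrative choices, not recommendations.

```python
from datetime import date, timedelta

# Hypothetical inventory records; field names are assumptions for illustration.
INVENTORY = [
    {"asset": "edge-gw-01", "owner": "ot-platform", "cert_expires": date(2026, 3, 10)},
    {"asset": "edge-gw-02", "owner": None, "cert_expires": date(2026, 9, 1)},
    {"asset": "hmi-line-2", "owner": "site-it", "cert_expires": date(2026, 2, 20)},
]


def lifecycle_findings(inventory, today, window_days=30):
    """List (asset, finding) pairs for missing owners and certificate expiry."""
    cutoff = today + timedelta(days=window_days)
    findings = []
    for item in inventory:
        if item["owner"] is None:
            findings.append((item["asset"], "no owner assigned"))
        if item["cert_expires"] < today:
            findings.append((item["asset"], "certificate expired"))
        elif item["cert_expires"] <= cutoff:
            findings.append((item["asset"], "certificate due for rotation"))
    return findings


findings = lifecycle_findings(INVENTORY, today=date(2026, 2, 25))
for asset, finding in findings:
    print(asset, "->", finding)
```

The check is trivial. What matters is that it can only exist if someone has already decided who owns each asset and where the inventory lives.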

The manufacturers doing this well treat cybersecurity as a core component of Industrial IoT value creation. They understand that trust is part of performance. A connected factory that cannot be defended is not advanced. It is fragile.

And fragility scales badly.

As industrial operations become more dependent on real-time data exchange, autonomous workflows, and AI-assisted actions, the consequences of compromised trust grow. A manipulated data stream can distort decisions. A compromised edge node can create blind spots. Weak access control can turn convenience into exposure. In industrial environments, these are not abstract IT problems. They are production risks.

The winners in 2026 are not the ones with the flashiest dashboards. They are the ones that can digitize boldly without turning their operations into soft targets.

4. Solve Integration Like an Architect, Not Like a Patchwork Mechanic

If there is one brutal reality that separates industrial theory from industrial execution, it is this: factories are messy.

They are full of history, constraints, legacy systems, vendor-specific protocols, partial upgrades, site-specific workarounds, tribal knowledge, and equipment that still creates value long after anyone expected it to remain in service. Anyone talking about Industrial IoT as if factories can simply rip out the old and replace everything with a clean greenfield stack is not dealing with the real world.

Integration is where most of the pain lives.

In many plants, the challenge is not getting data out of a modern sensor. The challenge is making old machines, local applications, SCADA systems, historians, MES platforms, ERP systems, quality tools, maintenance platforms, and cloud services work together in a way that is stable, understandable, and scalable. That is hard. It is also where most serious industrial value is unlocked.

A disconnected architecture creates several problems at once. Teams waste time reconciling inconsistent information. Root-cause analysis becomes slow and political because every function has its own version of truth. Automation opportunities remain limited because signals do not travel reliably across system boundaries. New applications become expensive to deploy because every integration is custom and fragile.

This is why integration should not be treated as plumbing work done after the exciting parts. It is one of the exciting parts, because it determines whether the system can act as a factory-wide nervous system or just a collection of isolated digital organs.

Good integration architecture starts with a hard look at the estate that already exists. What systems are stable and worth preserving? What systems are critical but aging? Where are the major protocol boundaries? Which data exchanges are batch-based but should be event-driven? Which business processes require synchronous coordination and which can tolerate asynchronous flows? Where is contextual data trapped? Where are the manual workarounds masking structural defects?

These questions matter because the wrong integration strategy creates technical debt immediately.

A common mistake is forcing modern analytics or cloud tools directly onto legacy environments without a translation layer. That often produces brittle point-to-point interfaces, duplicated data transformations, unclear ownership, and escalating maintenance burden. It works just enough to get approved, then becomes a nightmare to operate.

A better pattern is to use middleware, edge integration layers, industrial message brokers, and standardized protocol gateways to decouple systems intelligently. The point is not to hide legacy complexity completely. The point is to prevent that complexity from contaminating every new use case.
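As a rough sketch of what such a translation layer does, the adapter below turns a raw register dump from a legacy controller into a normalized event. The register addresses, divisors, and event fields are all invented for the example and do not describe any particular device or gateway product.

```python
from datetime import datetime, timezone

# Hypothetical register map for one legacy machine type:
# register address -> (signal name, unit, divisor applied to the raw integer)
REGISTER_MAP = {
    "40001": ("winding-temp", "degC", 10),
    "40002": ("current", "A", 100),
}


def normalize(raw_registers, site, asset, timestamp):
    """Translate raw register values into events with a shared structure."""
    events = []
    for address, raw_value in raw_registers.items():
        if address not in REGISTER_MAP:
            continue  # unmapped registers stay behind the adapter
        signal, unit, divisor = REGISTER_MAP[address]
        events.append({
            "site": site,
            "asset": asset,
            "signal": signal,
            "value": raw_value / divisor,
            "unit": unit,
            "timestamp": timestamp.isoformat(),
        })
    return events


ts = datetime(2026, 2, 19, 8, 35, tzinfo=timezone.utc)
events = normalize({"40001": 523, "40002": 1250, "49999": 7}, "pune", "motor-07", ts)
for e in events:
    print(e)
```

Downstream consumers see only the normalized event. Knowledge of register addresses and scaling stays in one place, so a controller replacement changes the adapter and nothing else.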

Open standards matter enormously here. Protocols such as MQTT and OPC UA, when used properly, can help create a more interoperable ecosystem. But even standards are not magic. You still need naming models, semantic alignment, payload discipline, event structures, and governance around how information is published and consumed. Otherwise, you just get standardized chaos.

This is also where the concept of a Unified Namespace has gained traction in serious industrial circles. Not because it solves everything by itself, but because it encourages a publish-subscribe model where plant and enterprise systems can consume shared operational context instead of constantly exchanging brittle custom integrations. It shifts the architecture from isolated application coupling toward a more event-driven operational fabric.

That shift matters because it changes how factories evolve. New analytics applications, AI services, visualization tools, alerts, and workflows can be added with less integration pain if the underlying information model is already flowing through a reusable data distribution layer. Without that, every new use case becomes another project, another connector, another exception, and another pile of avoidable complexity.
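The publish-subscribe idea can be shown without any broker at all. The sketch below is an in-memory stand-in with a simplified version of MQTT-style topic matching (`+` matches one level, `#` matches all remaining levels). It skips the validation and edge cases a real broker handles, and the topic hierarchy (enterprise/site/area/line/asset/signal) is an assumed layout, not a prescribed one.

```python
def topic_matches(pattern, topic):
    """Simplified MQTT-style match: '+' is one level, '#' is the rest."""
    p_levels = pattern.split("/")
    t_levels = topic.split("/")
    for i, p in enumerate(p_levels):
        if p == "#":
            return True
        if i >= len(t_levels):
            return False
        if p != "+" and p != t_levels[i]:
            return False
    return len(p_levels) == len(t_levels)


class InMemoryBroker:
    """Toy broker: publishers and subscribers know topics, not each other."""

    def __init__(self):
        self._subscriptions = []

    def subscribe(self, pattern, handler):
        self._subscriptions.append((pattern, handler))

    def publish(self, topic, payload):
        for pattern, handler in self._subscriptions:
            if topic_matches(pattern, topic):
                handler(topic, payload)


broker = InMemoryBroker()
maintenance_inbox, dashboard_inbox = [], []

# Maintenance cares about vibration on any asset at the Pune site.
broker.subscribe("acme/pune/+/+/+/vibration-rms",
                 lambda topic, payload: maintenance_inbox.append((topic, payload)))
# A dashboard added later subscribes to the whole press area.
broker.subscribe("acme/pune/press/#",
                 lambda topic, payload: dashboard_inbox.append(topic))

broker.publish("acme/pune/press/line-2/motor-07/vibration-rms", {"value": 6.1})
broker.publish("acme/pune/press/line-2/motor-07/winding-temp", {"value": 71.0})

print(len(maintenance_inbox), len(dashboard_inbox))
```

Adding the dashboard required no change to the publisher or to the maintenance consumer. That is the property that keeps each new use case from becoming another connector project.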

The manufacturers getting this right understand that integration is not a side activity. It is the mechanism by which industrial intelligence becomes usable across the organization. They do not romanticize greenfield thinking. They build realistic architectures that respect the life cycle of industrial assets while still creating a path toward modern digital operations.

That is what adults do. Everyone else builds demo stacks.

5. Design for Scale from Day One or Prepare to Rebuild Later

A pilot that works on one line proves almost nothing about whether the architecture can support a plant, a network of plants, or a global manufacturing footprint.

This is one of the most expensive lessons in Industrial IoT. Teams celebrate early success, then discover that the original solution cannot handle broader rollout. Data volume spikes. Device counts multiply. Latency behavior changes. Governance becomes messy. Site-specific variations break assumptions. The cost of onboarding each additional asset stays too high. Security controls become inconsistent. Support becomes chaotic. And suddenly the pilot that looked promising becomes the blueprint for a future rebuild.

This happens because scalability was treated as a later problem.

That is a mistake. Scalability is not just about whether infrastructure can handle more data. It is about whether the architecture, operating model, governance approach, and integration patterns can support growth without collapsing under their own weight.

In 2026, serious Industrial IoT programs are expected to support not just monitoring, but cross-site analytics, AI model deployment, asset benchmarking, event orchestration, remote operations support, and increasingly autonomous workflows. That means scale has to be considered in technical, organizational, and semantic terms.

Technically, the architecture should be modular. Services should be decomposed enough to evolve independently. Data flows should support event-driven patterns rather than relying entirely on brittle batch transfers. Edge and cloud responsibilities should be defined rationally. Real-time local decisions belong close to the process. Heavy analytics, historical optimization, fleet-level learning, and enterprise coordination may belong in centralized platforms. The trick is not choosing edge or cloud as if they are ideological camps. The trick is using both deliberately.
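A small sketch of that division of labor follows: the edge function decides immediately on local data and forwards only a compact summary, while the fleet-level comparison runs centrally. Function names, the trip limit, and the summary fields are assumptions for illustration.

```python
from statistics import mean


def edge_step(window, trip_limit):
    """Runs close to the process: decide locally, forward only a summary."""
    trip = max(window) > trip_limit
    summary = {
        "count": len(window),
        "mean": mean(window),
        "max": max(window),
        "tripped": trip,
    }
    return trip, summary


def fleet_ranking(summaries_by_asset):
    """Runs centrally: compare assets using the summaries the edge forwarded."""
    return sorted(summaries_by_asset,
                  key=lambda asset: summaries_by_asset[asset]["mean"],
                  reverse=True)


trip_a, summary_a = edge_step([1.0, 2.0, 6.0], trip_limit=5.0)
trip_b, summary_b = edge_step([1.0, 1.5, 2.0], trip_limit=5.0)
ranking = fleet_ranking({"motor-07": summary_a, "motor-08": summary_b})
print(trip_a, trip_b, ranking)
```

The trip decision never waits on a network round trip, and the central platform never has to ingest every raw sample to benchmark assets against each other.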

Scalability also depends on repeatability. Can a new line, site, or asset group be onboarded using templates, standard models, reusable data contracts, and proven patterns? Or does each rollout require custom engineering and tribal heroics? The answer to that question often determines whether an Industrial IoT initiative becomes strategic infrastructure or an endlessly expensive services exercise.
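In its simplest form, template-based onboarding looks something like the sketch below. The template contents and the topic layout are hypothetical. The idea is that onboarding a new asset means filling in parameters rather than writing new integration code.

```python
# Hypothetical template for one asset class; contents are illustrative only.
MOTOR_TEMPLATE = {
    "asset_class": "motor",
    "signals": ["vibration-rms", "winding-temp", "current"],
    "sample_interval_s": 1,
}


def onboard(site, area, line, asset, template):
    """Expand a template into the concrete topics a new asset will publish."""
    return {
        "asset": asset,
        "asset_class": template["asset_class"],
        "sample_interval_s": template["sample_interval_s"],
        "topics": [f"{site}/{area}/{line}/{asset}/{signal}"
                   for signal in template["signals"]],
    }


config = onboard("pune", "press", "line-3", "motor-21", MOTOR_TEMPLATE)
for topic in config["topics"]:
    print(topic)
```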

Semantic scale matters too. If each site uses different naming, different tag structures, different contextual conventions, and different interpretations of shared metrics, enterprise-level insight will stay weak no matter how much data is collected. A scalable industrial system needs shared information models that can tolerate local specificity without losing global comparability.
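One common way to reconcile local specificity with global comparability is a per-site mapping onto a shared model, sketched below. The site names, local tag formats, and shared signal names are invented for the example.

```python
# Hypothetical per-site mappings from local tag names to the shared model.
SITE_MAPS = {
    "pune": {"M07_VIB": ("motor-07", "vibration-rms")},
    "graz": {"MTR-7/Vib": ("motor-07", "vibration-rms")},
}


def to_shared(site, local_tag, value):
    """Translate a site-local tag into the shared (asset, signal) model.

    Unmapped tags raise KeyError so gaps are surfaced instead of guessed.
    """
    asset, signal = SITE_MAPS[site][local_tag]
    return {"site": site, "asset": asset, "signal": signal, "value": value}


a = to_shared("pune", "M07_VIB", 6.1)
b = to_shared("graz", "MTR-7/Vib", 3.4)
print(a["signal"] == b["signal"])
```

Each site keeps its local names on the plant floor, while enterprise analytics sees one signal definition and can compare the two motors directly.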

Operationally, scale requires ownership. Who governs the platform? Who approves new integrations? Who manages standards? Who owns edge deployments? Who supports site onboarding? Who ensures cybersecurity controls remain consistent? Who manages lifecycle updates? Without these answers, the architecture may scale in technology terms but fail in delivery terms.

A lot of organizations still think of scalability as a future luxury. That is sloppy thinking. The moment a pilot demonstrates value, scale becomes a business expectation. If the original design did not anticipate that reality, the program will either stall or require painful rework.

The strongest manufacturers now build with a long horizon in mind. They may start small in scope, but they do not think small in architecture. They use pilots to validate operational value and deployment patterns, not to excuse fragile designs. They deliberately test whether the solution can absorb more devices, more data, more sites, and more use cases. They stress not just functionality but operability.

That is what mature Industrial IoT looks like.

Conclusion: The Future Belongs to Factories That Can Act, Not Just Observe

The Industrial IoT conversation has matured. The era of treating connectivity alone as transformation is over.

In 2026, the real divide is not between factories that have IoT and factories that do not. It is between factories that collect data and factories that operationalize intelligence. Between factories that admire dashboards and factories that shorten decision loops. Between factories with digital noise and factories with digital architecture.

That divide will define the next decade of industrial competitiveness.

The winners will not be the organizations that deploy the most sensors or buy the most fashionable platforms. They will be the ones that align Industrial IoT with hard operational outcomes, build disciplined industrial data systems, embed cybersecurity into the foundation, integrate legacy and modern systems intelligently, and scale with architectural intent.

That is how factories move from fragmented visibility to coordinated action.

That is how industrial data stops being a reporting burden and starts becoming an operational asset.

And that is how manufacturers begin the transition from connected operations to increasingly autonomous ones.

The real promise of Industrial IoT has never been data collection. It has always been industrial decision advantage.

The companies that understand that are not building tech showcases.

They are building the future factory.

Arvind updated on 19 Feb 2026, 08:35AM

The point about factories becoming “digitally busier, not operationally smarter” is painfully accurate. We have dashboards in three systems, but operators still need hallway conversations to understand what is actually happening on the line.

Lena updated on 19 Feb 2026, 10:14AM

Same here. The breakthrough for us was not another dashboard, but aligning machine events with production context and maintenance history. Once the data started telling a complete story, teams actually trusted it.

Markus updated on 19 Feb 2026, 01:20PM

The cybersecurity section is especially relevant. Too many programs still treat OT connectivity as an engineering convenience problem instead of a business continuity problem.

Neha updated on 19 Feb 2026, 03:02PM

Exactly. Once remote access, edge gateways, and cloud sync are introduced, cyber posture becomes part of plant reliability. That shift still has not fully landed in many organizations.

Daniel updated on 20 Feb 2026, 09:10AM

The integration argument is spot on. Most of the cost in our rollout was not sensors or analytics; it was reconciling PLC, MES, historian, quality, and ERP data without creating another custom spaghetti layer.

Kavya updated on 20 Feb 2026, 11:48AM

That mirrors our experience. We moved to a brokered event model and it reduced onboarding friction for new use cases dramatically. The old point-to-point model looked cheap at first and became very expensive later.

Tobias updated on 20 Feb 2026, 04:05PM

I appreciate the blunt line that not every problem needs IoT. In some cases, weak process discipline gets hidden behind “digital transformation” language, and the plant ends up automating confusion.

Harish updated on 21 Feb 2026, 08:25AM

The pilot purgatory point should be mandatory reading for every manufacturing leadership team. We proved anomaly detection on one asset group, but scaling failed because naming, governance, and site standards were never designed.

Julia updated on 21 Feb 2026, 10:41AM

That is the real lesson. A successful pilot should validate deployment patterns and business value, not just show that a visualization can be built.

Sandeep updated on 21 Feb 2026, 02:30PM

The article does a good job connecting data architecture to AI readiness. Many companies want industrial AI outcomes while still feeding models delayed, inconsistent, context-poor input.

Felix updated on 21 Feb 2026, 05:16PM

Well said. AI is not a rescue layer for bad operational data. If the semantic model is unstable, the model output becomes difficult to trust at scale.

Anika updated on 22 Feb 2026, 09:00AM

The distinction between factories that observe and factories that act is the strongest part of the piece. That is exactly where the board conversation should be focused now.

Rohit updated on 22 Feb 2026, 01:12PM

Would love to see a follow-up specifically on how to phase governance across multi-site deployments. The technical patterns are important, but platform ownership is where many enterprise rollouts start to wobble.

Greta updated on 22 Feb 2026, 03:40PM

Agreed. Standards, onboarding templates, support ownership, and lifecycle management decide whether architecture becomes infrastructure or just another consulting program.